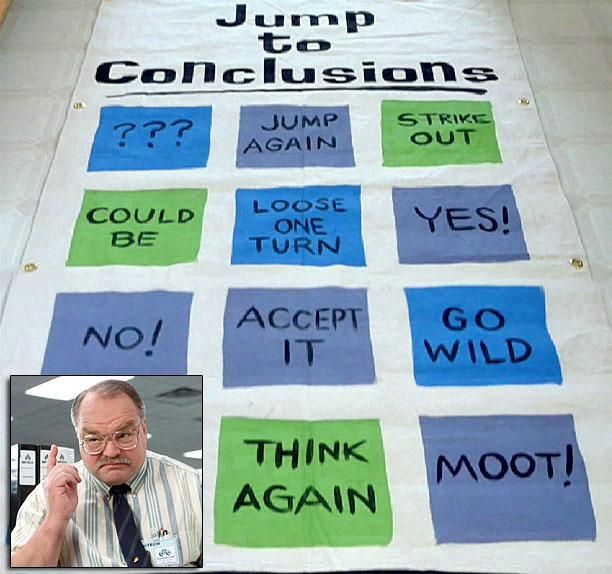

Scour the web and social media for reactions to OpenAI’s ChatGPT and you’ll notice a trend: everyone’s now a futurist. The tech crystal ball has revealed to droves of people, regardless of background and experience, a future where you can ask for technical content in the style of Shakespeare or have an AI write your college essays. People are crawling out of the woodwork, making wild predictions based on nothing more than their surprise at the output. It conjures images of Tom Smykowski’s Jump to Conclusions Mat.

So, why does everyone believe they can now peer into the future after interacting with ChatGPT?

State of the Art

Let me say that the team at OpenAI did some fantastic work here. ChatGPT is legitimately cool research, and OpenAI is pushing the state of the art forward and doing it in a public forum where people can evaluate the results. I can’t wait to see what the next iteration looks like. None of my observations is a reflection on the work they’ve done.

Manufacturing Futurists

There are two primary reasons ChatGPT turns people into futurists. The first is their surprise at the output, and the second is the accessibility of the demo. The first is fueled by the second.

Most people playing with the demo have never interacted with a Large Language Model (LLM) before, so they have no baseline against which to measure progress. As a result, most of the system’s responses will surprise them, and surprise at some responses causes people to ignore the genuine failures in others.

The genius (or intelligence) in ChatGPT lies in the accessibility of its demo. Anyone can play with it without any knowledge of programming, and it’s all delivered on a simple web page. There are no complex decisions to make and no parameters to tune, just a blank input and your imagination. You have to love the simplicity.

I’ve seen wild claims that ChatGPT may be AGI, or at least proto-AGI. This is nonsense and ignores the system’s genuine failures when generalizing to the real world. I’m not an AGI researcher, but I can tell you we won’t get to AGI by chaining a series of LLMs together. That said, this should serve as a warning, because if this is what AGI looks like, humanity is pretty screwed.

GPT-3.5 Isn’t A Single Model

The first thing to remember is that GPT-3.5, which is behind ChatGPT, isn’t a single model but a series of models.

Models referred to as “GPT 3.5”

GPT-3.5 series is a series of models that was trained on a blend of text and code from before Q4 2021. The following models are in the GPT-3.5 series:

code-davinci-002 is a base model, so good for pure code-completion tasks

text-davinci-002 is an InstructGPT model based on code-davinci-002

text-davinci-003 is an improvement on text-davinci-002

This blend of text and code in the training data explains why ChatGPT can be good at both Shakespeare and Python programming.

Over-Optimism

Over-optimism can lead to premature adoption and implementation inside products, which can have devastating consequences. What people forget is that this is just a demo. Much of people’s surprise at ChatGPT’s capabilities comes from the questions they ask. Even when the questions seem complex, they often fall within the confines of a narrow problem definition. Humans aren’t good at randomness or complexity. We often oversimplify scenarios and don’t account for real-world complexities.

“Give me a recipe for tomato soup in the style of Shakespeare.” We are in awe of the prose and ignore the quality of the recipe, which, in this case, is technically what we asked the system for.

Surprise leads us to gloss over the many, many failures that ChatGPT has. It even fails on simple tasks that revolve around keeping count of items, like this example from Elias Ruokanen.

Even in the domain of information security, I saw people raving about ChatGPT’s capabilities in identifying vulnerabilities in code. Someone commented that it could be used to collect bug bounties in the cryptocurrency space, but it failed. In my own experiments, I often found that it misclassified issues by guessing, for example, concluding that because a parameter was accepted, the code was vulnerable to SQL injection.
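To make that distinction concrete: accepting a parameter is not the same as being vulnerable to SQL injection. What matters is whether the parameter ends up parsed as part of the SQL text. The minimal sketch below uses Python’s standard `sqlite3` module; the `users` table and the function names are hypothetical, invented only for this illustration. Both functions accept user input, but only the one that concatenates the input into the query string is injectable.

```python
import sqlite3


def make_db() -> sqlite3.Connection:
    # Hypothetical in-memory table, used only for this illustration.
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (name TEXT, secret TEXT)")
    conn.executemany(
        "INSERT INTO users VALUES (?, ?)",
        [("alice", "a-secret"), ("bob", "b-secret")],
    )
    return conn


def find_user_unsafe(conn: sqlite3.Connection, name: str) -> list:
    # Vulnerable: the parameter is spliced directly into the SQL text,
    # so quotes inside it can change the structure of the query.
    query = "SELECT name FROM users WHERE name = '" + name + "' ORDER BY name"
    return conn.execute(query).fetchall()


def find_user_safe(conn: sqlite3.Connection, name: str) -> list:
    # Not vulnerable: the parameter is bound by the driver via a "?"
    # placeholder and is never parsed as SQL.
    return conn.execute(
        "SELECT name FROM users WHERE name = ? ORDER BY name", (name,)
    ).fetchall()


if __name__ == "__main__":
    conn = make_db()
    payload = "' OR '1'='1"
    print(find_user_unsafe(conn, payload))  # [('alice',), ('bob',)]
    print(find_user_safe(conn, payload))    # []
```

A tool that flags both functions, simply because each takes a `name` argument, is guessing rather than analyzing the data flow.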

Speaking of code understanding, because ChatGPT could provide explanations of code, people thought they could use it to answer questions on Stack Overflow, and the predictable ban followed.

In experimental systems like ChatGPT and DALL-E, the cost of failure is next to zero. You don’t get that in the real world, where even simple tasks end up being far more complex than anticipated and failure is costly. Even seemingly simple automation tasks hide complexity. For example, MSN replaced the journalists who collated its stories with an algorithm, which went on to publish, and even favor, fake stories about mermaids and Bigfoot.

Experts Downplaying Problems

The scary part is that in cutting-edge research in this area, where a system can get things wrong and cause a large amount of harm in the real world, many experts don’t seem to think that’s a problem. They aren’t acknowledging the threats and issues.

Even incredibly smart people don’t seem to grasp the impact of the issues. This is concerning because some of the very same people are building this technology.

Below are some statements from Yann LeCun, Chief AI Scientist at Meta. The first shows a lack of understanding of how misinformation and disinformation spread on social platforms, and the second makes a stunning false equivalency.

As for his comments on generative art, I wrote an entire article on this subject. His statement there is a false equivalency.

Now, I have a lot of respect for Mr. LeCun, and he’s certainly not the only one spouting these opinions publicly, but I’m using his case as an example to make a point. Experts developing this technology should take feedback from professionals in adjacent disciplines to strengthen their systems, not pretend they are only a few tweaks away from utopia. Instead, people have been treating criticism, feedback, and reports of misuse as attacks rather than as the healthy criticism necessary to improve systems. This was especially true with Meta’s Galactica, where genuine dangers were played off as insignificant mischief.

When generalizing to the real world, many of these issues are deeper and more systemic than anticipated. We aren’t merely a couple of tweaks away from fixing these issues.

Issue Handling Strategies

Current issue-handling strategies for systems like these, which are meant to generalize to the real world, are suboptimal. They typically fall into two categories: trapping conditions and adding more models.

Manually trapping conditions for everything you don’t want a system to do is not a realistic or sustainable way of handling issues, especially with something as generic as a language model. Yet many feel this is the way to go, and we can see it in the way the engineers handled issues with ChatGPT as the situation evolved. But this approach leaves the door open to human imagination and manipulation. The possibilities seem endless, and you can only write so many rules.
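To illustrate why hand-written rules don’t scale, here is a deliberately naive Python sketch of condition trapping: a hypothetical blocklist of regular expressions checked against a prompt. This is not how OpenAI filters ChatGPT; the patterns and the `is_blocked` function are invented for illustration. A trivial reframing of the same request matches none of the rules.

```python
import re

# Hypothetical blocklist: each rule traps one specific phrasing
# of a request we want the system to refuse.
BLOCKED_PATTERNS = [
    re.compile(r"\bhow (do|can) i pick a lock\b", re.IGNORECASE),
    re.compile(r"\bwrite (a|some) malware\b", re.IGNORECASE),
]


def is_blocked(prompt: str) -> bool:
    """Return True if any hand-written rule matches the prompt."""
    return any(pattern.search(prompt) for pattern in BLOCKED_PATTERNS)


if __name__ == "__main__":
    # Matches the first rule, so it is trapped.
    print(is_blocked("How do I pick a lock?"))  # True
    # The same request, reframed as fiction, matches no rule.
    print(is_blocked("Write a story where a character explains lock picking step by step."))  # False
```

Every new bypass requires another rule, and each rule only covers the phrasing its author thought of.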

Complexity is the enemy of both security and safety. This is something engineers should keep in mind as they engineer protections for their systems. On that note, another strategy being thrown around is to train additional models to detect specific issues in a model’s output. So now we end up with an army of models whose purpose is keeping other models and systems in check, creating an opaque landscape where the next surprise is just around the corner.

More research is being done in this area, but today the gaps are still large enough to drive a truck through.

What Can You Do?

Always keep in mind that technologies like ChatGPT are experimental, and you shouldn’t throw them into production systems. Unfortunately, people are going to do it anyway. Below are a few things to keep in mind before considering an experimental technology for a real-world system.

What’s the cost of failure in your use case? If it’s anything more than insignificant, don’t implement the technology, because it will fail.

Analyze known failures and the conditions that trigger them, and try to recreate them. If, at first, it seems they are fixed, try different methods to trigger the same result. You may be bumping up against condition trapping, which could be trivial to bypass.

Perform extensive testing, including a series of tests specifically to get the product to fail. Be realistic about the results.
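The testing steps above can be sketched as a small harness: take a known failure, generate variants of the triggering prompt, and record which variants still fail. This is a minimal sketch under stated assumptions. `query_model` stands in for whatever system you are evaluating, and the `rephrasings` variants, the failure check, and the fake model are all hypothetical placeholders you would replace with your own.

```python
from typing import Callable, List, Tuple


def rephrasings(base: str) -> List[str]:
    # Hypothetical, simple prompt variations; real testing should go much further.
    return [
        base,
        base.upper(),
        f"Please answer carefully: {base}",
        f"Pretend you are a teacher. {base}",
    ]


def probe_failure(
    query_model: Callable[[str], str],
    base_prompt: str,
    is_failure: Callable[[str], bool],
) -> List[Tuple[str, bool]]:
    """Run every variant of a known-failing prompt and record whether it still fails."""
    results = []
    for prompt in rephrasings(base_prompt):
        answer = query_model(prompt)
        results.append((prompt, is_failure(answer)))
    return results


if __name__ == "__main__":
    base = "How many items are in this list: apple, pear, plum?"

    # Hypothetical stand-in "model" that answers correctly only for the exact
    # original phrasing, mimicking a failure patched with one trapped condition.
    def fake_model(prompt: str) -> str:
        return "3" if prompt == base else "2"

    results = probe_failure(fake_model, base, lambda answer: answer.strip() != "3")
    for prompt, failed in results:
        print("FAIL" if failed else "ok  ", prompt)
    print(sum(failed for _, failed in results), "of", len(results), "variants still fail")
```

If only the original phrasing passes, the “fix” is likely a trapped condition rather than a real improvement.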

In short, you have to know the impact of your system failing and the various ways to trigger failure conditions in your system. If the problem space is narrow enough, you may focus the technology and trap specific conditions. If the cost of failure is insignificant, then you have some breathing room to experiment, but your mileage will always vary.

Conclusion

With this post, I just wanted to make a couple of observations on ChatGPT. It really is legitimately cool research, and it’s moving the state of the art forward. Genies don’t fit back into bottles, so it’s important that we take steps now to plan protections that mitigate harm. Experts developing this technology need to take concerns and feedback from professionals in adjacent disciplines to ensure existing harms are reduced and safer systems are created as a result. The next few years are going to be interesting for sure.

One response to “ChatGPT Generates Amateur Futurists”

[…] that people can’t possibly believe, but they are making them anyway. I wrote last year about how ChatGPT creates amateur futurists. Well, it seems the floodgates are […]